Video Presentation

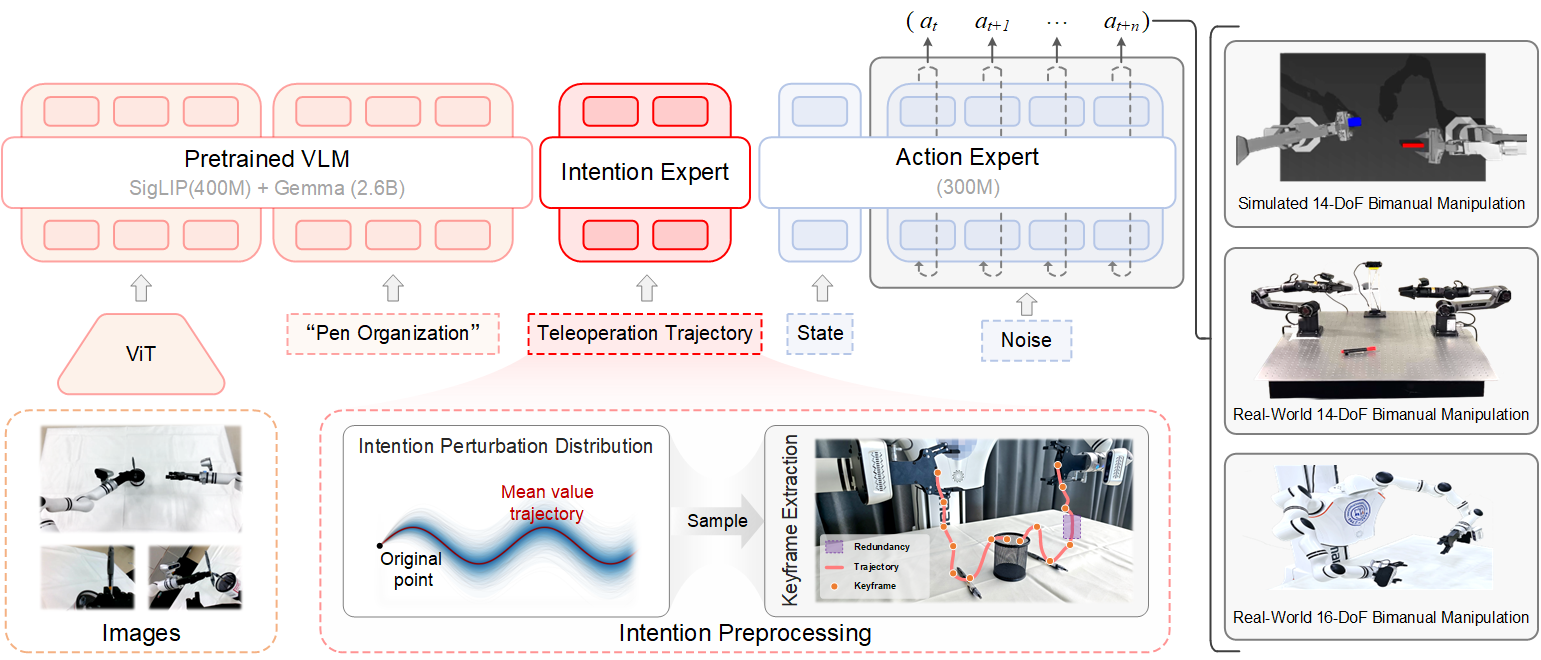

Method

Intention Preprocessing

Intention preprocessing models intent uncertainty in two steps: it constructs perturbation distributions through stochastic noise injection, and it extracts keyframes from expert trajectories.
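The following minimal Python sketch shows one possible reading of this preprocessing step. It is not the authors' implementation. Several pieces are hypothetical assumptions: the keyframe rule (the first step, the last step, and every step where the gripper state flips), the isotropic Gaussian noise model, the waypoint layout `[x, y, z, gripper]`, and the function names `extract_keyframes`, `perturb_position`, and `build_intention_samples`.

```python
import random
from typing import Dict, List, Sequence

# Hypothetical waypoint layout: [x, y, z, gripper], gripper in {0.0, 1.0}.
Waypoint = Sequence[float]


def extract_keyframes(traj: Sequence[Waypoint], gripper_idx: int = -1) -> List[int]:
    """Assumed rule: keep the first and last steps plus every gripper state change."""
    if not traj:
        return []
    keys = [0]
    for t in range(1, len(traj)):
        if traj[t][gripper_idx] != traj[t - 1][gripper_idx]:
            keys.append(t)
    if keys[-1] != len(traj) - 1:
        keys.append(len(traj) - 1)
    return keys


def perturb_position(pos: Sequence[float], sigma: float, n_samples: int,
                     rng: random.Random) -> List[List[float]]:
    """Draw n_samples noisy copies of a position (assumed isotropic Gaussian noise)."""
    return [[x + rng.gauss(0.0, sigma) for x in pos] for _ in range(n_samples)]


def build_intention_samples(traj: Sequence[Waypoint], sigma: float = 0.01,
                            n_samples: int = 8, seed: int = 0) -> Dict[int, List[List[float]]]:
    """Map each keyframe index to an empirical perturbation distribution over its xyz position."""
    rng = random.Random(seed)
    return {k: perturb_position(traj[k][:3], sigma, n_samples, rng)
            for k in extract_keyframes(traj)}


# Usage on a toy pick-and-place trajectory (made-up numbers).
traj = [
    [0.0, 0.0, 0.20, 0.0],
    [0.1, 0.0, 0.10, 0.0],
    [0.1, 0.0, 0.05, 1.0],  # gripper closes
    [0.1, 0.1, 0.20, 1.0],
    [0.2, 0.1, 0.20, 0.0],  # gripper opens
]
keyframes = extract_keyframes(traj)        # [0, 2, 4]
samples = build_intention_samples(traj)    # 8 noisy xyz samples per keyframe
```

Treating each keyframe as a small cloud of samples, rather than a single point, is one simple way to express that the demonstrator's exact target is uncertain. The noise scale `sigma` would need tuning to the workspace units.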

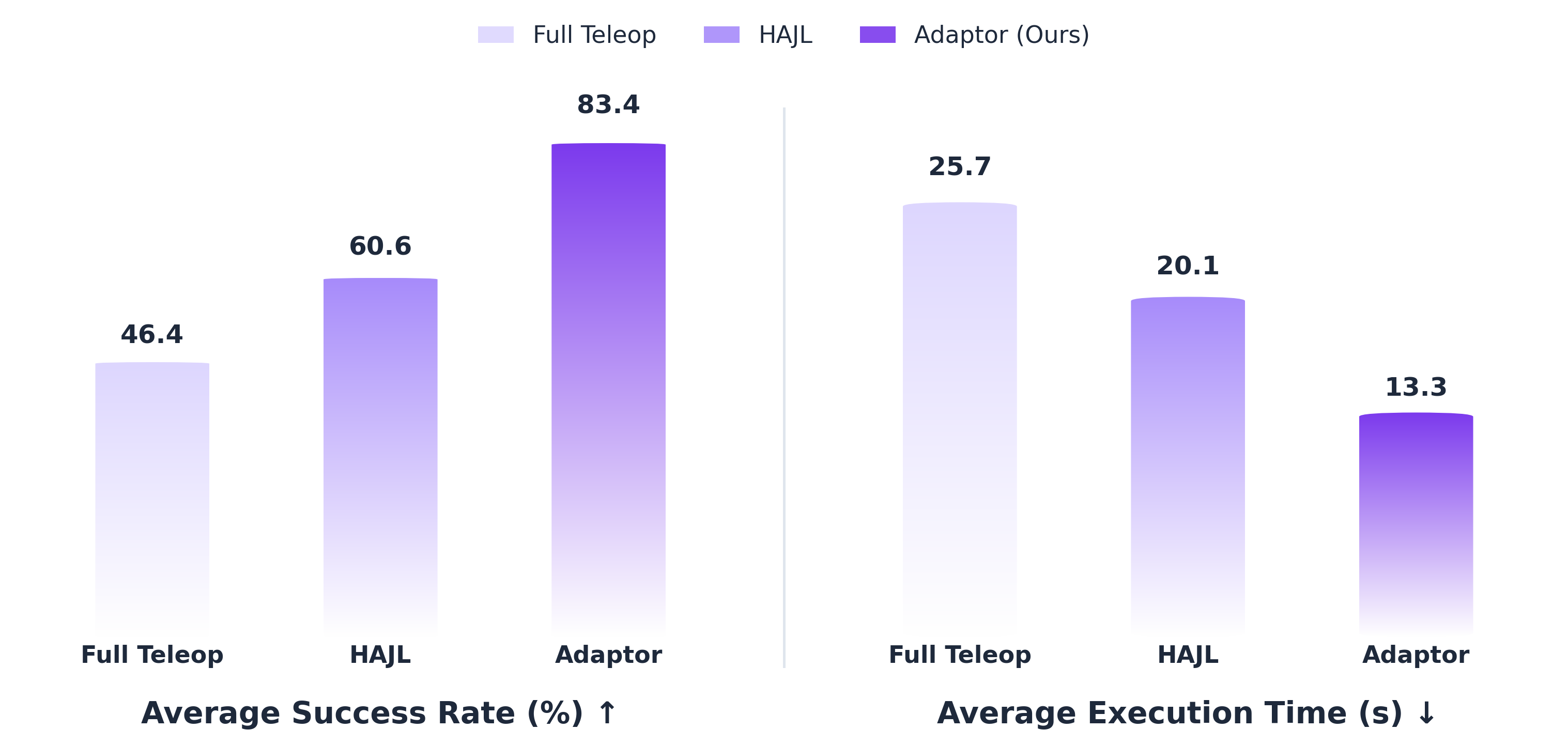

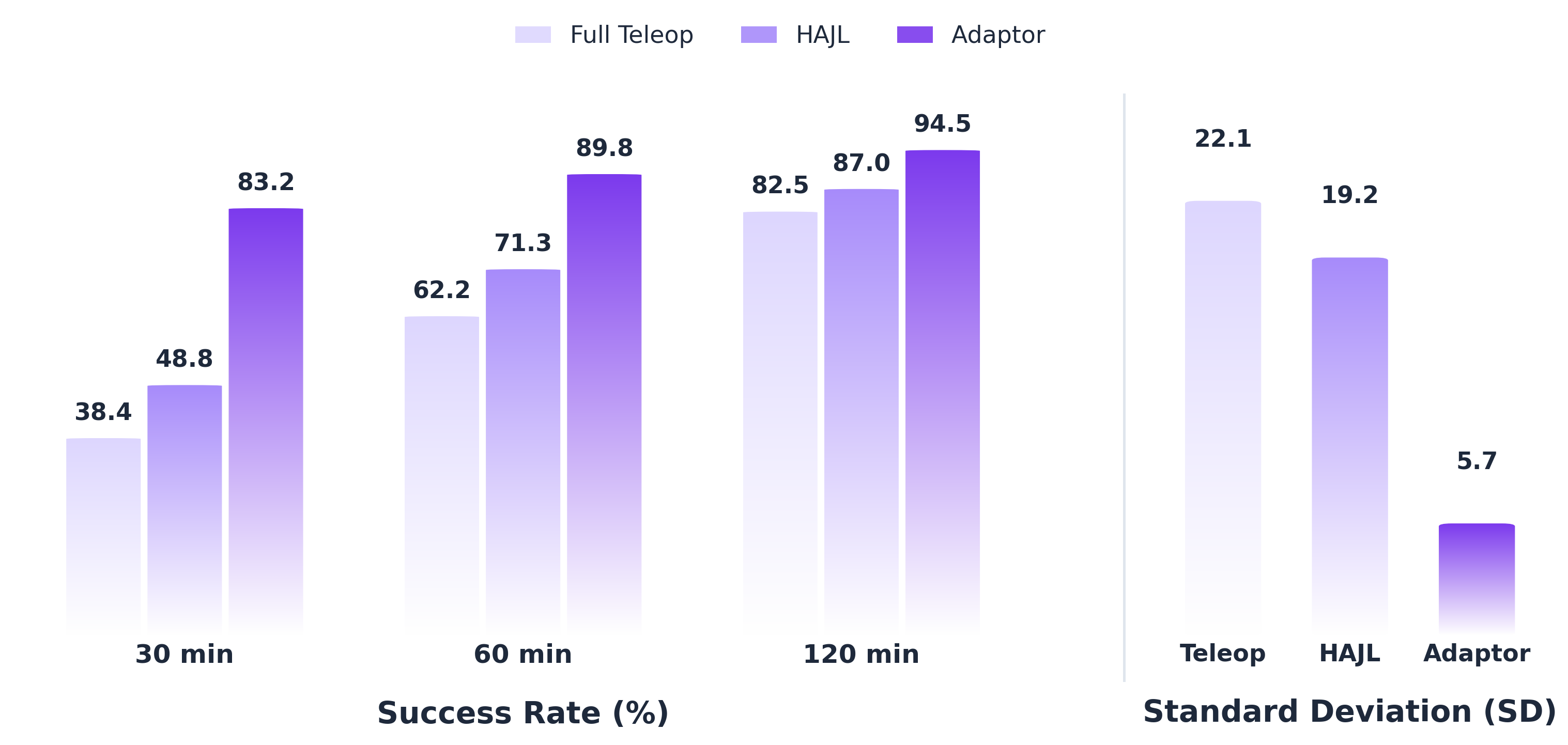

Policy Learning

Policy learning uses a three-expert architecture: the VLM Expert encodes the environmental context, the Intention Expert fuses semantic information with trajectory guidance, and the Action Expert generates precise control commands.
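The data flow between the three experts can be sketched in Python as follows. This is only an interface illustration, built on hypothetical assumptions. Each expert is replaced by a toy stand-in: element-wise addition of observation and instruction embeddings for the VLM Expert, a weighted blend of context and trajectory guidance for the Intention Expert, and a single linear layer for the Action Expert. The real experts are learned networks whose internals are not described here. All function names and the `alpha` blending parameter are illustrative.

```python
from typing import List, Sequence

Vector = List[float]


def vlm_expert(obs_emb: Sequence[float], text_emb: Sequence[float]) -> Vector:
    """Toy stand-in: combine visual and language embeddings into an environment context."""
    return [o + t for o, t in zip(obs_emb, text_emb)]


def intention_expert(context: Sequence[float], guidance: Sequence[float],
                     alpha: float = 0.5) -> Vector:
    """Toy stand-in: fuse semantic context with trajectory (keyframe) guidance."""
    return [(1.0 - alpha) * c + alpha * g for c, g in zip(context, guidance)]


def action_expert(intent: Sequence[float], weights: Sequence[Sequence[float]],
                  bias: Sequence[float]) -> Vector:
    """Toy stand-in: linear head mapping the fused intent to control commands."""
    return [sum(w * x for w, x in zip(row, intent)) + b for row, b in zip(weights, bias)]


def policy_step(obs_emb, text_emb, guidance, weights, bias, alpha=0.5) -> Vector:
    """Chain the experts: VLM -> Intention -> Action."""
    context = vlm_expert(obs_emb, text_emb)
    intent = intention_expert(context, guidance, alpha)
    return action_expert(intent, weights, bias)


# Usage with tiny made-up embeddings and a 3-dimensional action.
action = policy_step(
    obs_emb=[1.0, 0.0],
    text_emb=[0.0, 1.0],
    guidance=[0.0, 2.0],
    weights=[[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
    bias=[0.0, 0.0, 0.0],
)
```

Keeping the three roles behind separate functions mirrors the described split of responsibilities: context encoding, intent fusion, and control generation can each be swapped out without changing the others' interfaces.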